Maybe Musk's DOGE Isn't the Nightmare I Expected

(But Let's Not Get Too Excited)

When I first heard about the DOGE project, I was ready to throw my laptop out the window. The initial proposal read like a Silicon Valley libertarian fever dream - taking Musk's chaotic "move fast and break democracy" Twitter approach and unleashing it on federal agencies. Great, I thought, just what we need: more tech bros thinking they can "optimize" public institutions into oblivion.

But here's the weird thing - when you actually dig into what DOGE has morphed into, it's basically just the U.S. Digital Service (USDS) with a meme-worthy rebrand. For those not deep in the civic tech weeds, USDS was the Obama administration's attempt to drag government technology out of the 1990s, inspired by the UK's actually-functional Government Digital Service (GDS). And let's be real - government tech procurement is an absolute dumpster fire right now. We're talking billions wasted on projects that would make a CS101 student cringe, while the average American has to navigate websites that look like they were designed on GeoCities.

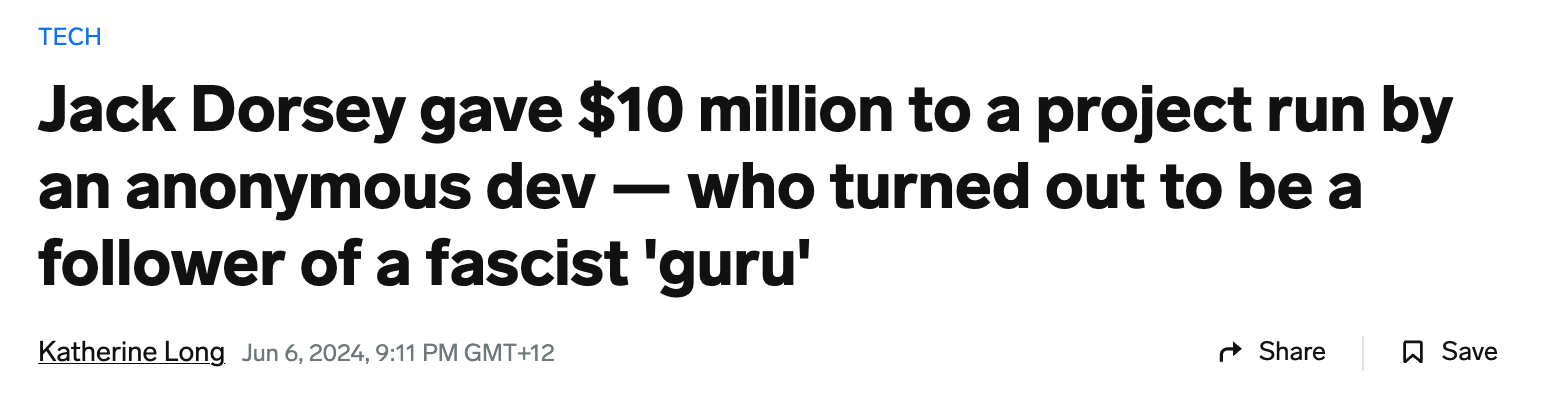

The deeply ironic thing is that Musk - for all his cosplaying as a technocratic messiah, following in the footsteps of a grandfather who dreamed of an antidemocratic technocratic state - actually has some relevant experience here. SpaceX did figure out how to work with government contracts without producing $500 million paperweights, and Tesla somehow gamed clean vehicle incentives into birthing the first new major car company since we invented radio.

Look, I'm the first to roll my eyes at Musk's wannabe-fascist posting sprees and his perpetual "I'm the main character of capitalism" energy. But speaking as someone who's banged their head against the wall of government technology modernization for years - if he actually focuses on the tech and keeps his brainrot political takes to himself, maybe DOGE could do some good?

Even Jen Pahlka, who basically wrote the book on government digital services, is cautiously optimistic. The services Americans get from their government are objectively terrible, and the procurement system is trapped in an infinite loop of failure. Maybe - and I can't believe I'm typing this - Musk's particular flavor of disruptive tech deployment could help?

Just... please, for the love of all things agile, let's keep him focused on the actual technology and far away from any more attempts to recreate his grandfather's dreams of a technocratic dystopia. We've got enough of those already.